The 4 ‘next big things’ to revolutionise retail

Graphene

Graphene is a ‘wonder molecule’ composed entirely of carbon atoms.

It is extracted from graphite, the material used in pencils, and its unique atomic structure gives it remarkable properties.

It conducts electricity better than copper; it is stronger but lighter than steel; and it is impermeable to gases. Its benefits are seemingly endless, and the real-world applications of the material are far from fully realised.

In a few years, we may also be wearing it. In October 2016, a team from Tsinghua University fed mulberry leaves coated in graphene to silkworm larvae. When they collected the resulting silk thread, they found it was twice as strong as normal silk and could cope with 50% more stress before degrading.

Plus, the material can conduct heat and electricity – meaning, for instance, that we could soon be wearing solar-powered clothes that can charge our phones, or clothes that change colour.

Graphene has the potential to change almost everything we use: alongside 3D printing, it could herald a digital manufacturing revolution.

There are challenges to overcome – the biggest being that it is not yet possible to mass-produce graphene – but the patience required will be worth it. Graphene’s potential to revolutionise everything from retail to construction is huge.

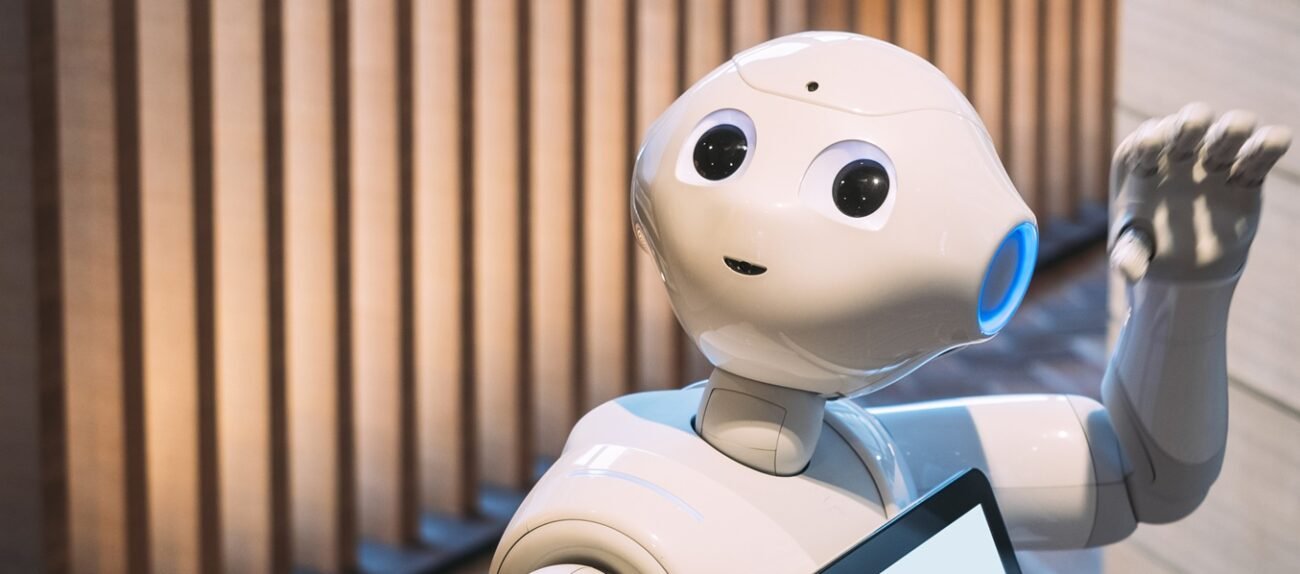

Robots

Amazon’s warehouse robots and Ocado’s logistics robotic assistants are two high-profile examples of how robots are helping to make retail more efficient.

But this is an area where evolution happens quickly, and two things are certain for the future of robotics. Firstly, humanoid robots will become more realistically ‘human’, with a wider range of abilities, as AI and sensory technologies evolve. Secondly, drones will get smaller, nimbler and faster.

Engineers at the Massachusetts Institute of Technology have been working on miniaturising the computer chips in drones – no small task, given the amount of data required to fly one.

As scientists get closer to achieving this, though, we can expect tiny, bottle-cap-sized drones. These will be useful in natural disasters, for instance, because their small size will allow them to squeeze through narrow gaps when assessing damage or searching for people.

The drones could also be used in a personal capacity: a smartphone owner will be able to take out their tiny drone, use it to film a video as it flies around them, and upload it online via their phone.

Artificial Intelligence

Ever-improving AI will fuel the connected home – including in-home assistants such as Amazon Alexa – as well as self-driving cars and robots. Plus, it’s hard to go a week without hearing about another part of the economy where jobs might be automated.

The fear surrounding job losses might yet turn out to be overhyped – previous tech-led revolutions have not led to mass unemployment – but there is no doubt that AI will change many areas of people’s lives.

Already, it can understand language – consumer-facing technologies that use natural language processing are improving all the time.

It can play games: AI has beaten humans at chess and is close to being able to beat us at poker.

It can also act as the ultimate admin assistant – trawling through thousands of pages of legal documents, for instance, looking for anomalies in a fraction of the time it takes a team of trainee lawyers to do it.

It is also getting better at understanding people’s emotions – a significant development for retailers. AI will eventually enable brands to respond in real time to an individual’s reactions. Mood-sensing technology in stores, for instance, will pick up on whether or not someone is annoyed, know exactly when to lower the price or offer a deal, and predict when someone is about to walk out.

In July 2017, researchers at Warwick Business School even programmed an AI to recognise beauty – the idea being that if software could be programmed to value the same things as humans, city planning for wellbeing could potentially be automated.

However, much of the work in AI is being done by tech’s biggest names, meaning it is likely to be Amazon, Facebook and Google who shape the imminent future of the technology.

As a result, the biggest leaps are likely to come in the shape of AI on smartphones and in drones. Expect to see services such as smartphone apps that offer real-time in-ear language translation, or Facebook capturing and analysing the content of videos playing on its apps.

Smartphone-based AI is in its early days, but has the biggest potential of all these technologies to make an impact on consumers in the near future.

Virtual Reality

Virtual reality’s development has so far been hampered by a lack of affordable headsets on the market, alongside the requirement for high-power computing that few consumers have access to.

But by 2020, this is likely to have started changing – Gartner predicts that consumers and businesses will have access to quality immersive devices, systems, tools and services by then, and 5G networks will be available in many markets.

While VR and AR (augmented reality) are still five to ten years from mass adoption, they are starting to encroach on how people live, and key businesses are investing in and exploring the possibilities they represent.

VR has traditionally been seen as a solo activity – it conjures up images of lone gamers – but its future is resolutely communal.

In a few years, two people or more will be able to ‘hang out’ in a virtual environment, with avatars based on each person visible within the virtual world.

Information about each person’s likes and dislikes will be used to create the virtual environment, meaning every virtual world could be personalised.

Plus, several start-ups are developing technologies that bring a sensorial element to the virtual experience.

The most important part of this is introducing the feeling of movement and touch to VR – haptic bodysuits track movement using embedded sensors, and ultrasound waves are being used to mimic the feeling of touching something. It could also mean adding a sense of smell to enrich the overall experience.

The most imminent uses of this sort of fully immersive VR are in computer gaming and military simulations, but it’s also not too much of a leap to imagine a fully shoppable virtual retail environment that enables geographically disparate friends to meet up.

Some retailers – Chinese behemoth Alibaba and Australian department store Myer with eBay – have already made it possible to shop and pay for things in a virtual environment.

The technology has a long way to go before it’s possible to mimic the feel of different fabrics, but as with most of these technologies, development is happening quickly.

Retailers can use VR in other ways too – many are already using it to mock up different store designs, then using emotion-sensing technology to monitor shoppers as they shop the different formats and determine the most successful design.

Plus, it has a potential role in product design, as seen in Nike’s conceptual video created with start-ups Meta and Ultrahaptics, showing how VR could be used in the future.

VR may not be with us on a mass scale just yet, but once prices come down and computing power increases, it will only be a matter of time.

Via RetailWeek
